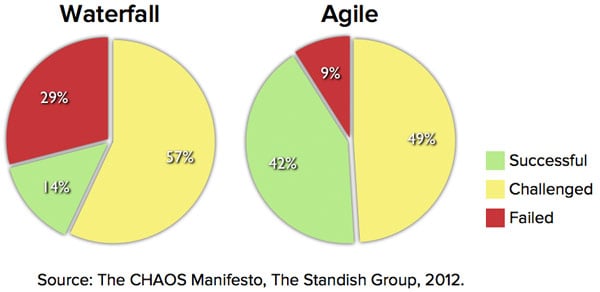

Agile projects succeed three times as often as non-agile projects, according to the 2011 CHAOS report from the Standish Group. The report goes so far as to say, "The agile process is the universal remedy for software development project failure. Software applications developed through the agile process have three times the success rate of the traditional waterfall method and a much lower percentage of time and cost overruns" (page 25). The Standish Group defines a successful project as one delivered on time, on budget, and with all planned features. The report does not say how many projects are in the group's database, but it does say that the results come from projects conducted from 2002 through 2010. The following graph shows the specific results reported.

Last update: April 25th, 2023